"Our tailored course provided a well-rounded introduction and also covered some intermediate-level topics that we needed to know. Clive gave us some best-practice ideas and tips to take away. Fast-paced, but the instructor never lost any of the delegates."

Brian Leek, Data Analyst, May 2022

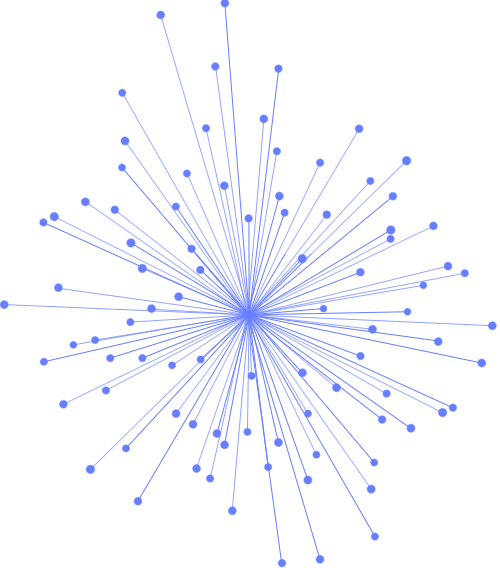

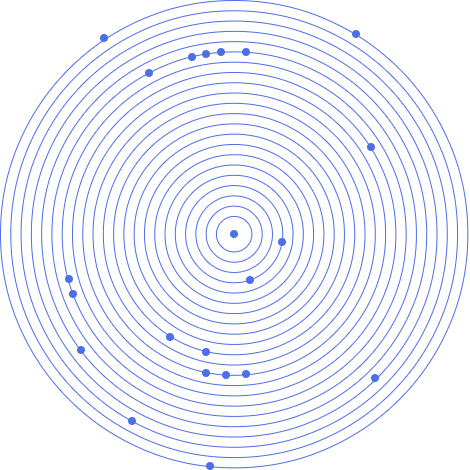

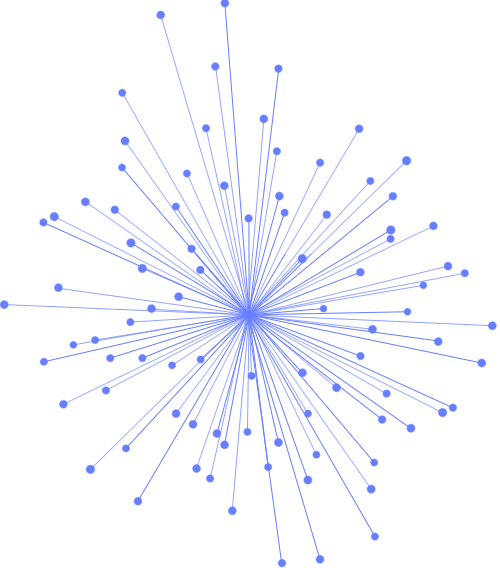

This course is for IT professionals who want to learn how to architect and administer Apache Hadoop clusters for Big Data.


“JBI did a great job of customizing their syllabus to suit our business needs and also bringing our team up to speed on the current best practices. Our teams varied widely in terms of experience and the Instructor handled this particularly well - very impressive”

Brian F, Team Lead, RBS, Data Analysis Course, 20 April 2022


In this Hadoop architecture and administration training course, you will gain the skills to install, configure and manage the Apache Hadoop platform and its associated ecosystem.

You will learn how to build a Hadoop solution that satisfies your business requirements.

CONTACT

+44 (0)20 8446 7555

Copyright © 2025 JBI Training. All Rights Reserved.

JB International Training Ltd - Company Registration Number: 08458005

Registered Address: Wohl Enterprise Hub, 2B Redbourne Avenue, London, N3 2BS
